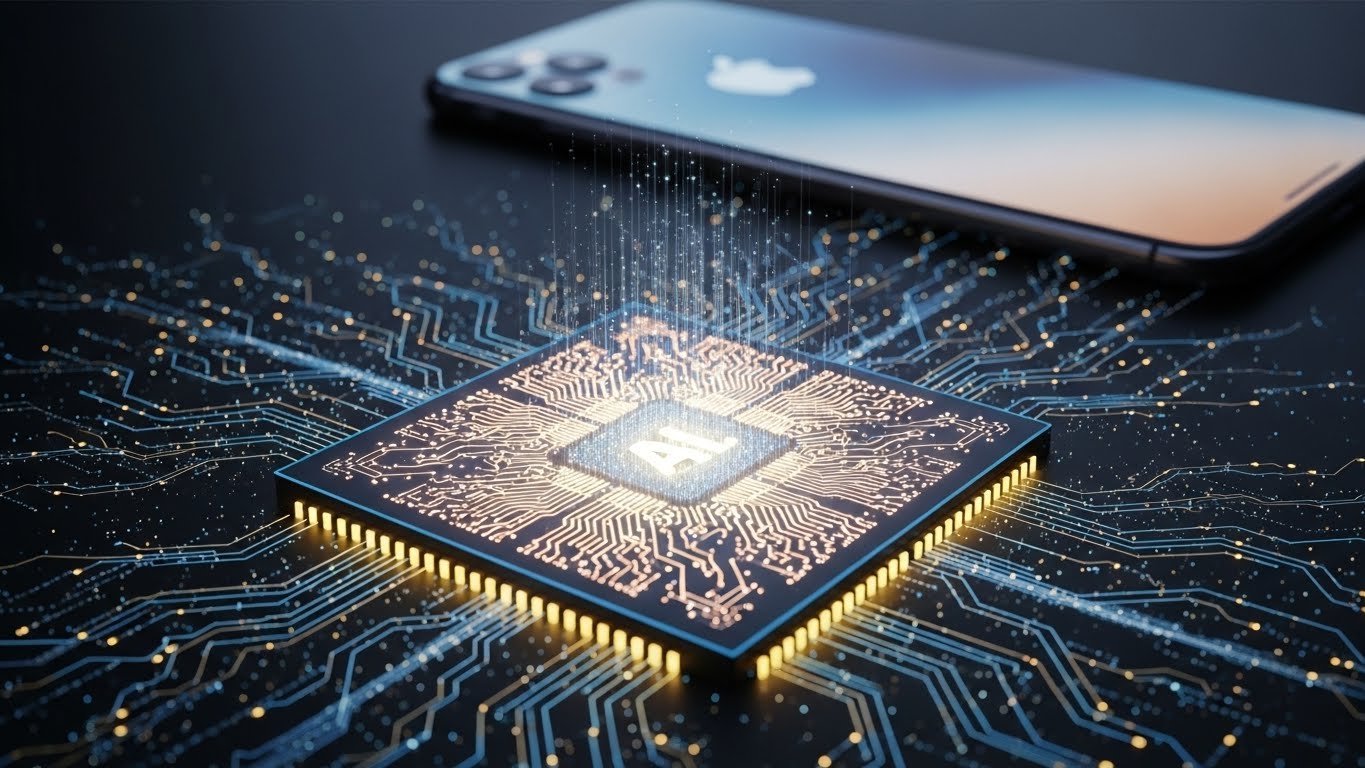

Apple has announced a new generation of AI-focused chip architecture designed to run large language models and advanced generative intelligence entirely on-device. The breakthrough, showcased during a closed-door briefing with developers and industry partners, represents one of the most aggressive moves yet in the battle to dominate next-generation mobile computing. Unlike cloud-dependent AI systems, Apple’s new architecture emphasizes privacy, low-latency processing, and real-time performance, allowing complex AI tasks — from image generation to personal assistants — to run locally on iPhones, iPads, and Macs. Industry analysts say the development could reshape competition across the mobile sector and pressure rival chipmakers to accelerate their own AI roadmaps. Source: Tech industry reporting and analyst briefings — summarized and analyzed by TheDollarPulse.

Key Development

The new chip design integrates a re-engineered Neural Engine capable of running models with tens of billions of parameters without relying on external servers. According to engineers involved in the briefing, the architecture combines unified memory scaling, advanced thermal optimization, and a redesigned computational fabric aimed specifically at high-density AI tasks. Early benchmarks shared confidentially with partners show performance gains of more than 40 percent compared with the current generation. Apple emphasized that the architecture was built with security at its core, ensuring sensitive user data remains on the device. Source: Industry engineering disclosures — summarized and analyzed by TheDollarPulse.
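To put "tens of billions of parameters" in perspective, the memory needed just to hold a model's weights can be estimated with simple arithmetic. The sketch below is illustrative only: the 30-billion-parameter figure and the 16-, 8-, and 4-bit precisions are assumptions chosen for the example, not numbers from the briefing. It also ignores activations, caches, and other runtime overhead.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    # Hypothetical 30B-parameter model at three assumed weight precisions.
    for bits in (16, 8, 4):
        print(f"30B parameters at {bits}-bit: {weight_memory_gb(30, bits):.0f} GB")
```

Under these assumptions, lower-precision weights shrink the footprint proportionally, which is one reason unified memory capacity matters for running large models locally.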

Why It Matters

On-device AI is expected to define the next decade of consumer technology. Running advanced intelligence locally reduces reliance on cloud infrastructure, lowers operational costs, improves privacy, and enables real-time responsiveness — features that are increasingly critical as users adopt generative tools in daily life. Apple’s move positions it strongly against competitors such as Google, Qualcomm, and Samsung, all of which are racing to develop similar capabilities. For developers, the architecture opens the door to new categories of applications that were previously impossible due to hardware constraints. Source: Analyst commentary from AI hardware specialists — summarized and analyzed by TheDollarPulse.

Market and Ecosystem Implications

Tech investors reacted positively to the announcement, anticipating a new hardware upgrade cycle driven by AI-enabled devices. App developers are already exploring opportunities in offline translation, image synthesis, personal productivity agents, and real-time media editing. Meanwhile, chipmakers face mounting pressure to deliver competitive on-device AI performance, potentially reshaping supply chains and accelerating innovation in semiconductor design. Industry observers note that the shift could reduce dependence on cloud-based AI providers, altering revenue models across the broader tech ecosystem. Source: Market analysis from semiconductor and mobile industry experts — summarized and analyzed by TheDollarPulse.

TheDollarPulse Analysis

Apple’s on-device AI breakthrough signals a strategic pivot in the broader tech landscape: the next frontier of artificial intelligence will be personal, local, and hardware-accelerated. This shift has deep implications for privacy, performance, and long-term competitive positioning. Cloud AI will remain essential for large-scale workloads, but the migration of generative capabilities to personal devices marks a transformative moment for both consumers and developers. The companies that adapt fastest to this hybrid AI future, integrating on-device intelligence with cloud-scale models, will shape the next era of mobile computing.

Sources

Source: Tech industry reporting, analyst briefings, and semiconductor disclosures — summarized and analyzed by TheDollarPulse.
